On-Premise CaaS — Build Your Sovereign PaaS

Enterprise On-Premise

Build your own sovereign PaaS.

LayerOps On-Premise lets you create your own Platform-as-a-Service on your infrastructure. Build a service catalog, deliver it to your teams, subsidiaries and partners — with full control over your data, costs and security.

Limited spots — program open to companies with 500+ employees

Your own Platform-as-a-Service

LayerOps On-Premise turns your infrastructure into a fully managed, sovereign PaaS. Create a curated service catalog and make it available to your teams, subsidiaries, partners or clients — under your brand, on your servers, with your rules.

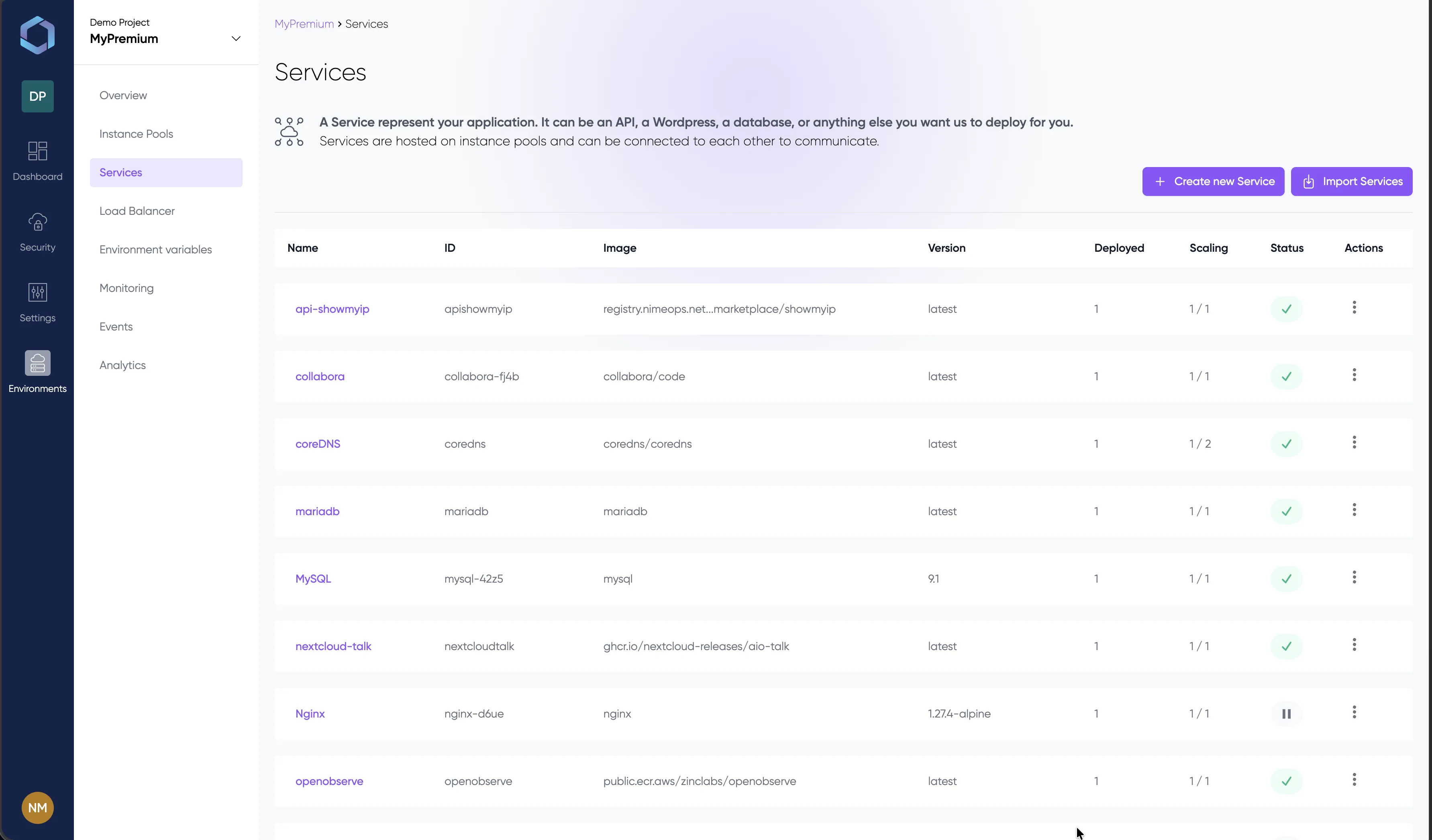

Service Catalog

Build and curate a catalog of ready-to-deploy services — databases, APIs, web apps, AI models. Your users self-serve from a governed catalog instead of managing raw infrastructure.

Multi-Tenant by Design

Provision isolated environments for each team, subsidiary, or partner. Each tenant gets its own services, monitoring, RBAC and resource quotas — all from one platform.

Full White-Label

Customize the console to your brand — logo, color palette, custom domain. Your users interact with a platform that carries your identity, not ours.

Sovereign AI Infrastructure

Deploy and operate AI workloads on your own infrastructure. Run private LLMs and manage your platform in natural language through the MCP Server, all without sending a single byte to an external API.

Private LLMs

Deploy open-source LLMs (Llama, Mistral, DeepSeek…) on your own GPU servers. Your models run within your perimeter — no data sent to OpenAI, no third-party API dependency. Full sovereignty over your AI stack.

MCP Server

Interact with your infrastructure in natural language through any AI assistant compatible with the Model Context Protocol (MCP). Deploy services, check status and scale resources through a conversational interface, powered by your own private LLMs.

GPU Workloads

Native GPU support for AI/ML inference and training on your own hardware. Auto-provision GPU resources across your server fleet: no cloud GPU shortages, no egress costs on model weights.

Why choose On-Premise?

All the benefits of the LayerOps CaaS platform — deployed on your own servers, your racks, your datacenters. Designed for mid-size and large enterprises (500+ employees).

Data Sovereignty

Your data never leaves your perimeter. No third-party transit. Full GDPR compliance. Architecture designed to support future certifications (ISO 27001, SOC 2, HDS, SecNumCloud).

Full Code Ownership

Every critical component — orchestration, secrets, access management — is developed in-house. No dependency on third-party orchestrators or open-source roadmaps.

Regulatory Compliance

Architecture designed to meet strict requirements (ISO 27001, SOC 2, HDS, HIPAA, PCI-DSS). Certifications are in progress. Every component can be audited without relying on an external provider.

Dedicated Infrastructure

Deploy on your own servers, racks, and datacenters. No noisy neighbors, so performance stays guaranteed and predictable. Your infrastructure, your rules.

Cost Control

No more unpredictable cloud bills. Leverage your existing hardware, pool resources, and eliminate egress bandwidth costs. Predictable, fixed infrastructure costs.

Optimal Performance

Minimal latency thanks to server proximity. With no network transit to an external cloud, your applications run as close as possible to your users.

IT Integration

Connect LayerOps to your Active Directory and LDAP. SSO (SAML/OIDC) is on the roadmap. LayerOps fits naturally into your existing IT ecosystem.

Granular Access Control

Advanced RBAC, environment isolation, complete audit trail. Define precisely who can do what on each resource in your infrastructure.

Controlled Updates

You choose when and how to update. Test in staging, plan maintenance windows according to your schedule. No forced upgrades.

The full LayerOps platform, at your place

Get every feature from our public CaaS offering, deployed on your own infrastructure — with the same ease of use.

| | LayerOps SaaS | LayerOps On-Premise |
|---|---|---|
| **Infrastructure** | | |
| Hosted by | LayerOps (public cloud) | Your own servers / datacenter |
| Data location | Cloud providers + private computing | Cloud providers + private computing |
| White-label console | | ✓ (logo, colors, domain) |
| Service catalog | ✓ (LayerOps platform catalog) | ✓ (your own curated catalog) |
| Multi-tenant environments | ✓ (isolated environments) | ✓ (isolated per team / subsidiary / partner) |
| **Platform Features** | | |
| Docker container deployment | ✓ | ✓ |
| Git integration | ✓ | ✓ |
| Autoscaling (services + instances) | ✓ | ✓ |
| HTTP/2 & HTTP/3 Load Balancer | ✓ | ✓ |
| Service Mesh with mTLS | ✓ | ✓ |
| Custom alerts | ✓ | ✓ |
| RBAC & environment isolation | ✓ (Premium) | ✓ |
| Backup to S3 | ✓ | ✓ |
| REST API & YAML CI/CD | ✓ | ✓ |
| MCP Server (AI assistant) | | ✓ (sovereign, private LLMs) |
| GPU workloads | ✓ (cloud + own GPUs) | ✓ (cloud + own GPUs) |
| **AI & Sovereignty** | | |
| Private LLM deployment | ✓ (Llama, Mistral, DeepSeek…) | ✓ (Llama, Mistral, DeepSeek…) |
| AI data stays on-premise | ✗ (cloud transit) | ✓ (zero external API calls) |
| **Enterprise** | | |
| Active Directory / LDAP | | ✓ |
| SSO (SAML/OIDC) | | On the roadmap |
| Controlled update schedule | Automatic | You choose when |
| Dedicated support & SLA | Optional Business Support | ✓ (included) |

Reversibility guarantee

Your data stays yours — always

With LayerOps, your data is stored on resources you own: your private Git repositories, your private Docker registries, and your S3-compatible backup volumes. If you decide to leave, everything is already in your hands — export your stack to Docker Compose, Kubernetes, or any OCI-compatible platform in one click.

Who is On-Premise for?

Mid-Size & Large Enterprises

Organizations with 500+ employees that want to build their own sovereign PaaS — a service catalog for their teams and subsidiaries, without depending on external SaaS.

Regulated Industries

Finance, healthcare, defense, public sector, energy — industries where data must stay within your perimeter and compliance is non-negotiable. Deliver governed services to your users.

Managed Service Providers

MSPs who want a white-label PaaS to build and deliver a service catalog to their own clients and partners, under their own brand.

Join the Early Access Program

Be among the first to deploy LayerOps On-Premise on your infrastructure. Limited spots for the launch phase.

What's included:

- Guided installation on your own infrastructure by our team

- Personalized support throughout the pilot phase

- Preferential pricing for early adopters

- Direct access to the product team for your feedback

Ready to deploy on your own infrastructure?

Contact us to discuss your On-Premise requirements and join the Early Access program.